There has been widespread discussion about the potential impact of AI on society. While the UK Government has played an active role in shaping the conversation around the security and safety of AI, it has also made clear its intention to embrace AI wherever it can deliver productivity and efficiency gains. It is also widely recognised that many UK Government departments hold enormous data sets which, if carefully deployed, could be used to train new AI models that improve decision-making across the full spectrum of public life.

At the start of 2024, the UK Cabinet Office and Central Digital & Data Office released the first ‘Generative AI Framework for His Majesty’s Government’. Government departments in the UK, like enterprise businesses, clearly recognise the transformative potential of AI, but there are understandable concerns about balancing any implementation with data security, especially when sensitive personal data is involved.

Armed with this framework, individual departments are starting to take very different approaches to generative AI: from the Department for Work and Pensions, which is planning a blanket block on generative AI, to the Home Office, which is exploring a test case with Microsoft Copilot. What is clear is that any government use of AI must put data security at its core.

Implementing the framework

To implement generative AI securely, an organisation, whether a public body or a private business, should start by developing a clear policy that can be referred to whenever applications of AI are considered. Where possible, we want to automate the controls that enforce that policy, so that we do not rely on the personal interpretation or actions of individuals.

Of the 10 common principles the HMG framework sets out to guide the safe, responsible and effective use of generative AI in government organisations, there are two specific areas where the onus is placed on individual departments to carry out tasks themselves. This leaves those departments open to a number of risks.

The first concerns evaluations and risk assessments. In reality, many individuals will simply lack the skills and technical capabilities to conduct effective risk assessments of AI use cases. Without a clear policy on the use of these tools, workers may run uncontrolled experiments to see how generative AI could support their work. Blanket bans, meanwhile, are seen as obstructive and tend to prompt workarounds. Left unchecked, this “shadow AI” can lead to the unapproved use of third-party AI applications outside a controlled network and to the inadvertent exposure of data, with potentially significant consequences.

The second, and perhaps even more troubling, is the reliance on human controls to review and validate the outputs and decision-making of AI tools. In an AI-augmented workplace, the sheer volume of data, implementations and operations involved makes purely manual control impractical. Instead, machine learning and AI should themselves be deployed to counter the risk: spotting data exfiltration attempts, categorising key data, and either blocking the transfer or coaching users in the moment towards alternative, safer behaviours.

AI secure in the cloud

For security leaders in the public sector, the challenge is twofold: how to prevent data loss while still enabling the department to operate efficiently.

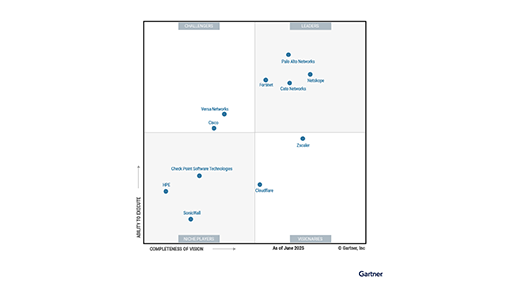

As the Government continues its transition to a cloud-first approach, we have a unique opportunity to design the security posture around security service edge (SSE) solutions. These tools let us permit or block the use of generative AI applications at a granular level while also monitoring the data flowing to and from those apps. For example, by adopting data loss prevention (DLP) tools, departments can identify and categorise sensitive data and block uploads of personal and sensitive data to these applications, supporting compliance with the relevant legislation and minimising data leakage. Beyond preventing data loss, DLP allows security teams to engage the workforce with targeted, real-time coaching that informs staff of departmental policies on the use of AI and nudges them towards preferred applications or behaviours in the moment.
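To make the DLP idea more concrete, here is a deliberately simplified sketch of how a policy might inspect text bound for a generative AI app and choose between allowing it, coaching the user, or blocking it. It is an illustration under stated assumptions, not how Netskope or any SSE product actually implements DLP: the regex detectors, the `APPROVED_APPS` allow-list, the app names and the three-way `Action` outcome are all hypothetical. Real DLP engines use far richer classifiers, including ML-based ones.

```python
import re
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    COACH = "coach"  # let the user continue, but show a policy reminder
    BLOCK = "block"


# Hypothetical detectors, for illustration only.
DETECTORS = {
    # Simplified UK National Insurance number shape (e.g. "AB 12 34 56 C");
    # it does NOT apply HMRC's rules on which prefix letters are valid.
    "ni_number": re.compile(r"\b[A-Z]{2}\s?\d{2}\s?\d{2}\s?\d{2}\s?[A-D]\b", re.IGNORECASE),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    # Government protective marking text
    "protective_marking": re.compile(r"\bOFFICIAL[-\s]SENSITIVE\b", re.IGNORECASE),
}

# Hypothetical allow-list of sanctioned generative AI apps.
APPROVED_APPS = {"approved-assistant.example"}


@dataclass
class Verdict:
    action: Action
    findings: list[str]
    message: str


def evaluate_upload(app: str, text: str) -> Verdict:
    """Classify an upload and decide what the policy should do with it."""
    findings = sorted(name for name, pattern in DETECTORS.items() if pattern.search(text))
    if findings:
        # Sensitive data is blocked regardless of which app receives it.
        return Verdict(Action.BLOCK, findings,
                       "Upload blocked: sensitive data detected (" + ", ".join(findings) + ").")
    if app not in APPROVED_APPS:
        # No sensitive data, but nudge the user towards an approved tool.
        return Verdict(Action.COACH, findings,
                       "This app is not approved; please use an approved assistant instead.")
    return Verdict(Action.ALLOW, findings, "")


if __name__ == "__main__":
    print(evaluate_upload("unknown-chatbot.example", "Summarise case AB 12 34 56 C").message)
```

Checking for sensitive data before checking the destination app reflects the ordering described above: personal and sensitive data is blocked outright, while harmless requests to unapproved apps become a coaching opportunity rather than a hard stop.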

With the reassurance DLP brings, security leaders can comprehensively assess new generative AI tools before implementing them. When evaluating any software tool, it is vital to have visibility of its third- and fourth-party suppliers, so that you understand how the tool relies on other AI models and providers. That visibility lets you allocate each tool a risk score, then permit tools deemed secure and block riskier applications. Because approved applications remain available, teams are less likely to circumvent security controls to use their preferred tools, which helps prevent shadow AI and potential data loss.
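The risk-scoring step can be sketched as a simple weighted checklist that includes the tool's upstream (fourth-party) AI providers. This is a minimal sketch under stated assumptions: the attributes, weights, `VETTED_PROVIDERS` set and `BLOCK_THRESHOLD` are hypothetical placeholders, not values from the HMG framework or any vendor's scoring model.

```python
from dataclasses import dataclass, field


@dataclass
class AIApp:
    name: str
    trains_on_customer_data: bool
    data_residency_uk: bool
    has_security_certification: bool
    # Other AI models/providers the tool relies on (its fourth parties)
    upstream_providers: list[str] = field(default_factory=list)


# Hypothetical weights: a higher score means higher risk (capped at 100).
WEIGHTS = {
    "trains_on_customer_data": 40,
    "no_uk_residency": 20,
    "no_certification": 25,
    "per_unvetted_upstream": 5,
}
VETTED_PROVIDERS = {"provider-a"}  # hypothetical list of already-assessed providers
BLOCK_THRESHOLD = 50               # hypothetical cut-off between permit and block


def risk_score(app: AIApp) -> int:
    """Sum the weights for each risk factor the app exhibits."""
    score = 0
    if app.trains_on_customer_data:
        score += WEIGHTS["trains_on_customer_data"]
    if not app.data_residency_uk:
        score += WEIGHTS["no_uk_residency"]
    if not app.has_security_certification:
        score += WEIGHTS["no_certification"]
    # Each upstream provider that has not been assessed adds fourth-party risk.
    unvetted = [p for p in app.upstream_providers if p not in VETTED_PROVIDERS]
    score += WEIGHTS["per_unvetted_upstream"] * len(unvetted)
    return min(score, 100)


def decide(app: AIApp) -> str:
    """Permit low-risk tools and block those at or above the threshold."""
    return "block" if risk_score(app) >= BLOCK_THRESHOLD else "permit"


if __name__ == "__main__":
    tool = AIApp("free-chatbot", True, False, False, ["provider-b", "provider-c"])
    print(tool.name, risk_score(tool), decide(tool))
```

An additive score is easy to explain and audit, which matters when a permit or block decision must be justified; in practice the weights and threshold would come from the department's own policy.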

The path to AI-powered government

The UK Cabinet Office describes its generative AI framework as “necessarily incomplete and dynamic”, which is a pragmatic position. However, SSE already gives us the tools both to establish a secure posture and to retain the flexibility to adapt and implement new policies as technologies evolve. With the continued push to the cloud, government departments should look to consolidate their security posture to gain the greatest possible visibility over their application infrastructure, while creating a secure and effective foundation on which to innovate and experiment with AI use cases.

With the foundational security infrastructure in place, government departments will be able to use the data they already hold to build tools that improve government efficiency and the quality of service delivery, and to introduce robust modelling that helps shape better policy.

If you are interested in learning more about how Netskope can help you safely adopt generative AI, please see our solution here.
